Time Travel snowflake: The Ultimate Guide to Understand, Use & Get Started 101

By: Harsh Varshney Published: January 13, 2022


To empower your business decisions with data, you need Real-Time High-Quality data from all of your data sources in a central repository. Traditional On-Premise Data Warehouse solutions have limited Scalability and Performance, and they require constant maintenance. Snowflake is a more Cost-Effective and Instantly Scalable solution with industry-leading Query Performance. It's a one-stop-shop for Cloud Data Warehousing and Analytics, with full SQL support for Data Analysis and Transformations. One of the highlighting features of Snowflake is Snowflake Time Travel.


Snowflake Time Travel allows you to access Historical Data (that is, data that has been updated or removed) at any point in time. It is an effective tool for doing the following tasks:

- Restoring Data-Related Objects (Tables, Schemas, and Databases) that may have been removed by accident or on purpose.

- Duplicating and Backing up Data from previous periods of time.

- Analyzing Data Manipulation and Consumption over a set period of time.

In this article, you will learn about Snowflake Time Travel and the steps involved in using it, with simple SQL commands along the way.

What is Snowflake?

Snowflake is a Cloud Data Warehouse solution built on the customer's preferred Cloud Provider's infrastructure (AWS, Azure, or GCP). Snowflake SQL adheres to the ANSI Standard and includes typical Analytics and Windowing Capabilities. Its syntax differs from other SQL dialects in places, but there are also many parallels.

Snowflake's integrated development environment (IDE) is entirely Web-based. Visit XXXXXXXX.us-east-1.snowflakecomputing.com. After logging in, you'll be taken to the primary Online GUI, which works as an IDE, where you can begin interacting with your Data Assets. Each query tab in the Snowflake interface is referred to as a “Worksheet” for simplicity. Worksheets are saved automatically, much like a tab history, and can be viewed at any time.

Key Features of Snowflake

- Query Optimization: By using Clustering and Partitioning, Snowflake may optimize a query on its own. With Snowflake, Query Optimization isn’t something to be concerned about.

- Secure Data Sharing: Database Tables, Views, and UDFs can be shared securely from one Snowflake account to another.

- Support for File Formats: JSON, Avro, ORC, Parquet, and XML are all Semi-Structured data formats that Snowflake can import. It has a VARIANT column type that lets you store Semi-Structured data.

- Caching: Snowflake has a caching strategy that allows the results of the same query to be quickly returned from the cache when the query is repeated. Snowflake persists query results for 24 hours to avoid regenerating the report when nothing has changed.

- SQL and Standard Support: Snowflake offers both standard and extended SQL support, as well as Advanced SQL features such as Merge, Lateral Joins, Statistical Functions, and many others.

- Fault Tolerance: Snowflake provides exceptional fault-tolerant capabilities to recover Snowflake objects (tables, views, databases, schemas, and so on) in the event of a failure.

For further information, check out the official Snowflake website.

What is Snowflake Time Travel Feature?

Snowflake Time Travel is an interesting tool that allows you to access data from any point in the past. For example, if you have an Employee table, and you inadvertently delete it, you can utilize Time Travel to go back 5 minutes and retrieve the data. Snowflake Time Travel allows you to Access Historical Data (that is, data that has been updated or removed) at any point in time. It is an effective tool for doing the following tasks:

- Query Data that has been changed or deleted in the past.

- Make clones of complete Tables, Schemas, and Databases at or before certain dates.

- Restore Tables, Schemas, and Databases that have been dropped.


How to Enable & Disable Snowflake Time Travel Feature?

1) Enable Snowflake Time Travel

To enable Snowflake Time Travel, no action is necessary: it is turned on by default, with a one-day retention period. However, to configure longer Data Retention Periods of up to 90 days for Databases, Schemas, and Tables, you'll need to upgrade to Snowflake Enterprise Edition. Please keep in mind that longer Data Retention requires more storage, which will be reflected in your monthly Storage Fees. See Storage Costs for Time Travel and Fail-safe for further information on storage fees.

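For example, adding DATA_RETENTION_TIME_IN_DAYS = 90 to a CREATE TABLE statement builds a table with 90 days of Time Travel retention.

To shorten the retention period for an existing table, use an ALTER TABLE statement that sets DATA_RETENTION_TIME_IN_DAYS to a smaller value.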
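The two statements described above can be sketched as a small Python helper that only formats the SQL text; it does not connect to Snowflake. The function names, the `employee` table, and its columns are hypothetical, and the 0 to 90 range check assumes an Enterprise Edition (or higher) account with permanent tables; a Standard Edition account accepts only 0 or 1.

```python
# Minimal sketch: format Snowflake DDL that sets the Time Travel retention
# period. It only builds strings; nothing is executed against Snowflake.
# Table and column names used below are hypothetical.


def _check_days(days: int) -> None:
    # 0 disables Time Travel; 90 days is the maximum (Enterprise Edition+).
    if not 0 <= days <= 90:
        raise ValueError("DATA_RETENTION_TIME_IN_DAYS must be between 0 and 90")


def create_table_with_retention(table: str, columns: str, days: int) -> str:
    """CREATE TABLE statement with an explicit retention period."""
    _check_days(days)
    return f"CREATE TABLE {table} ({columns}) DATA_RETENTION_TIME_IN_DAYS = {days};"


def alter_table_retention(table: str, days: int) -> str:
    """ALTER TABLE statement that lengthens or shortens the retention period."""
    _check_days(days)
    return f"ALTER TABLE {table} SET DATA_RETENTION_TIME_IN_DAYS = {days};"


if __name__ == "__main__":
    print(create_table_with_retention("employee", "id NUMBER, name VARCHAR", 90))
    print(alter_table_retention("employee", 30))
```

Keeping the range check in one place means both statements reject the same invalid values before anything is sent to the warehouse.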

2) Disable Snowflake Time Travel

Snowflake Time Travel cannot be turned off for an account, but it can be turned off for individual Databases, Schemas, and Tables by setting the object’s DATA_RETENTION_TIME_IN_DAYS to 0.

Users with the ACCOUNTADMIN role can also set DATA_RETENTION_TIME_IN_DAYS to 0 at the account level, which means that by default, all Databases (and, by extension, all Schemas and Tables) created in the account have no retention period. However, this default can be overridden at any time for any Database, Schema, or Table.

3) What are Data Retention Periods?

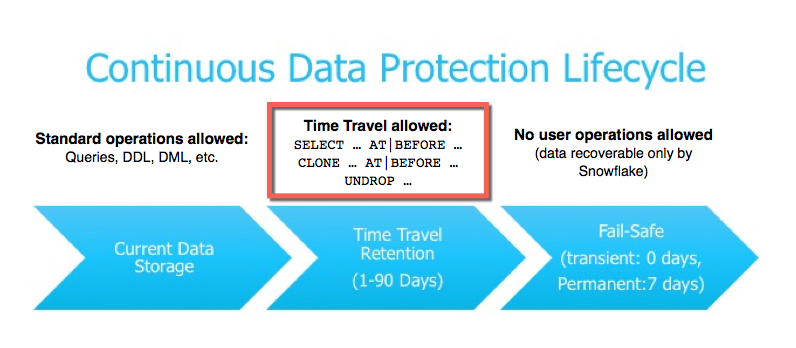

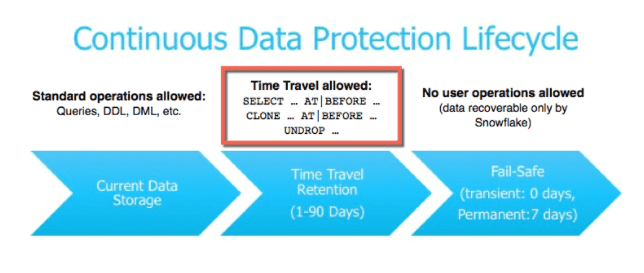

Data Retention Time is an important part of Snowflake Time Travel. When data in a table is modified (for example, deleted) or an object containing data is dropped, Snowflake preserves the state of the data before the change. The Data Retention Period sets the number of days that this historical data will be stored, allowing Time Travel operations (SELECT, CREATE… CLONE, UNDROP) to be performed on it.

All Snowflake Accounts have a standard retention duration of one day (24 hours), which is automatically enabled:

- In Snowflake Standard Edition, the Retention Period can be set to 0 (or unset back to the default of 1 day) at the account and object level (i.e., Databases, Schemas, and Tables).

- In Snowflake Enterprise Edition (and higher), the Retention Period for transient Databases, Schemas, and Tables can be set to 0 (or unset back to the default of 1 day). The same applies to Temporary Tables.

- In Snowflake Enterprise Edition (and higher), the Retention Time for permanent Databases, Schemas, and Tables can be configured to any value between 0 and 90 days.
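As a rough summary of these limits, the lookup below encodes the allowed ranges per edition and object kind. It is an illustrative sketch: `RETENTION_LIMITS` and `is_allowed_retention` are hypothetical names, and "enterprise" here stands for Enterprise Edition and higher.

```python
# Hypothetical lookup of the retention ranges listed above.
# Key: (edition, object kind). Value: (minimum days, maximum days), inclusive.
RETENTION_LIMITS = {
    ("standard", "permanent"): (0, 1),
    ("standard", "transient"): (0, 1),
    ("standard", "temporary"): (0, 1),
    ("enterprise", "permanent"): (0, 90),
    ("enterprise", "transient"): (0, 1),
    ("enterprise", "temporary"): (0, 1),
}


def is_allowed_retention(edition: str, kind: str, days: int) -> bool:
    """True if `days` is a valid retention period for this edition and object kind."""
    low, high = RETENTION_LIMITS[(edition.lower(), kind.lower())]
    return low <= days <= high


if __name__ == "__main__":
    print(is_allowed_retention("Enterprise", "permanent", 90))  # True
    print(is_allowed_retention("Standard", "permanent", 2))     # False
```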

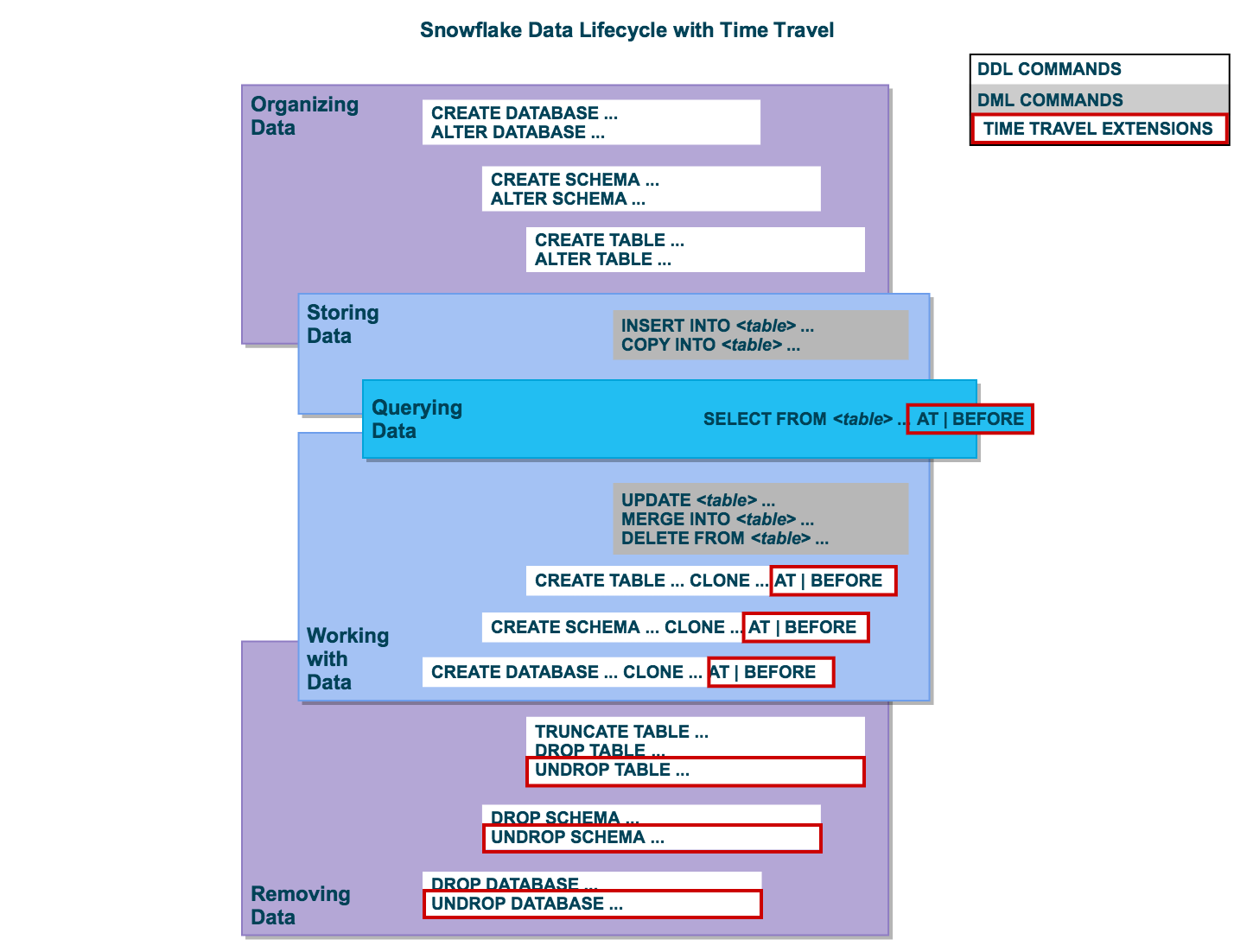

4) What are Snowflake Time Travel SQL Extensions?

The following SQL extensions have been added to facilitate Snowflake Time Travel:

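- The AT | BEFORE clause, which can be specified in SELECT statements and CREATE… CLONE commands (immediately after the object name). It uses one of the following parameters to pinpoint the historical data you want to access:

  - TIMESTAMP (a specific date and time)

  - OFFSET (time difference in seconds from the present time)

  - STATEMENT (identifier for statement, e.g. query ID)

- The UNDROP command for Tables, Schemas, and Databases.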

How Many Days Does Snowflake Time Travel Work?

How to Specify a Custom Data Retention Period for Snowflake Time Travel

In Standard Edition, the maximum Retention Time is 1 day (i.e., one 24-hour period). In Snowflake Enterprise Edition (and higher), the default for your account can be set to any value up to 90 days:

- The account default can be overridden with the DATA_RETENTION_TIME_IN_DAYS parameter when creating a Table, Schema, or Database.

- If a Database or Schema has a Retention Period , that duration is inherited by default for all objects created in the Database/Schema.

The Data Retention Time is set with the DATA_RETENTION_TIME_IN_DAYS parameter, either when the object is created or later with the appropriate ALTER command.


How to Modify Data Retention Period for Snowflake Objects?

When you alter a Table’s Data Retention Period, the new Retention Period affects all active data as well as any data in Time Travel. Whether you lengthen or shorten the period has an impact:

1) Increasing Retention

This causes the data in Snowflake Time Travel to be saved for a longer amount of time.

For example, if you increase the retention time from 10 to 20 days on a Table, data that would have left Time Travel after 10 days is now kept for an additional 10 days before being moved to Fail-Safe. This does not apply to data that is more than 10 days old and has already been moved to Fail-Safe.

2) Decreasing Retention

- Reduces the amount of time data is kept in Time Travel.

- The new Shorter Retention Period applies to active data updated after the Retention Period was trimmed.

- If the data is still inside the new Shorter Period , it will stay in Time Travel.

- If the data is not inside the new Timeframe, it is placed in Fail-Safe Mode.

For example, if you have a table with a 10-day Retention Period and reduce it to one day, data from days 2 through 10 will be moved to Fail-Safe, leaving only data from day 1 accessible through Time Travel.

However, since the data is moved from Snowflake Time Travel to Fail-Safe by a background operation, the change is not immediately visible. Snowflake guarantees that the data will be migrated but does not specify when the process will complete; until then, the data is still accessible using Time Travel.
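The effect of lengthening or shortening the retention period on a single historical version can be modeled as follows. This is a simplified sketch, not Snowflake's implementation: `age_days` is an assumed measure of how long ago the version was changed or deleted, the function name is hypothetical, and the delay of the background operation is ignored.

```python
def tier_after_retention_change(age_days: float, old_days: int, new_days: int) -> str:
    """Where a historical version ends up after the retention period changes.

    age_days: how long ago the version became historical (updated or deleted).
    """
    if age_days > old_days:
        # It already left Time Travel under the old setting; a longer
        # retention period does not bring it back.
        return "fail-safe or purged"
    if age_days <= new_days:
        # Still inside the (possibly new) window: stays in Time Travel.
        return "time travel"
    # Inside the old window but outside the shorter new one: moved to
    # Fail-safe (eventually, by the background operation).
    return "fail-safe"


if __name__ == "__main__":
    # 10-day retention reduced to 1 day: a 5-day-old version leaves Time Travel.
    print(tier_after_retention_change(5, old_days=10, new_days=1))
    # 10-day retention raised to 20 days: an 8-day-old version is kept longer.
    print(tier_after_retention_change(8, old_days=10, new_days=20))
```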

Use the appropriate ALTER <object> Command to adjust an object's Retention duration. For example, for a table: ALTER TABLE <table_name> SET DATA_RETENTION_TIME_IN_DAYS = <days>;

How to Query Snowflake Time Travel Data?

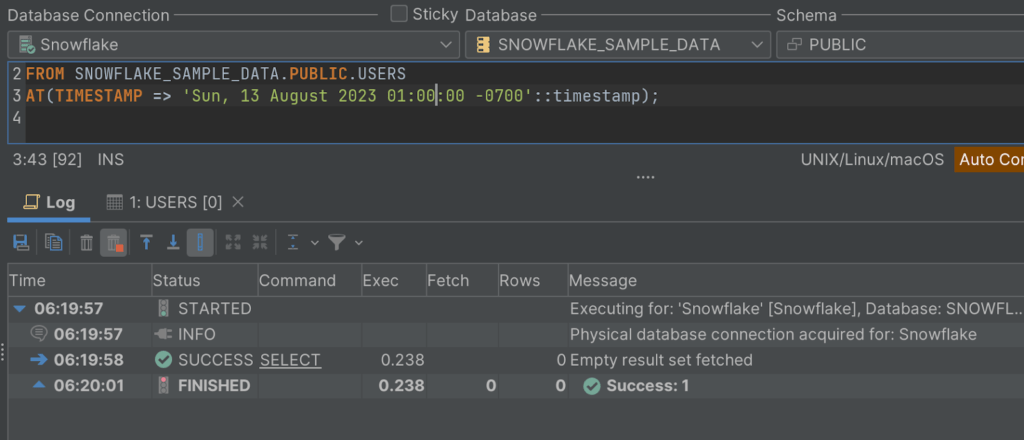

When you perform DML operations on a table, Snowflake saves prior versions of the Table data for a set amount of time. Using the AT | BEFORE Clause, you can Query previous versions of the data.

This Clause allows you to query data at or immediately before a specific point in the Table's history within the Retention Period. The point can be time-based (e.g., a Timestamp or a Time Offset from the present) or the ID of a statement (e.g., a SELECT or INSERT).

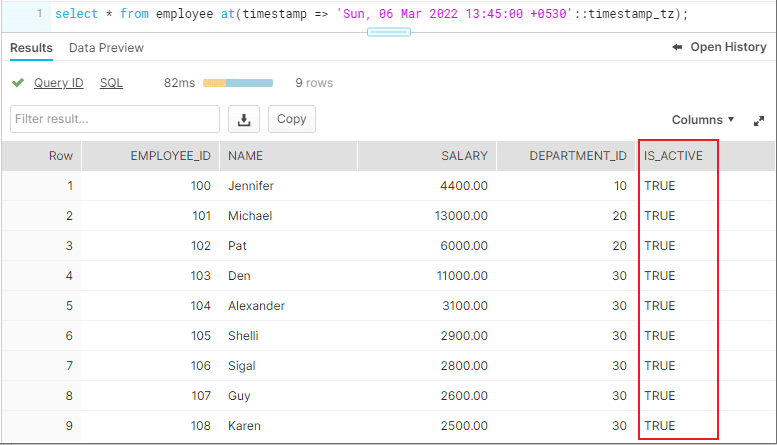

- AT(TIMESTAMP => <timestamp>) selects Historical Data from a Table as of the Date and Time indicated by the Timestamp.

- AT(OFFSET => -60*5) selects Data from a Table as it was 5 minutes ago.

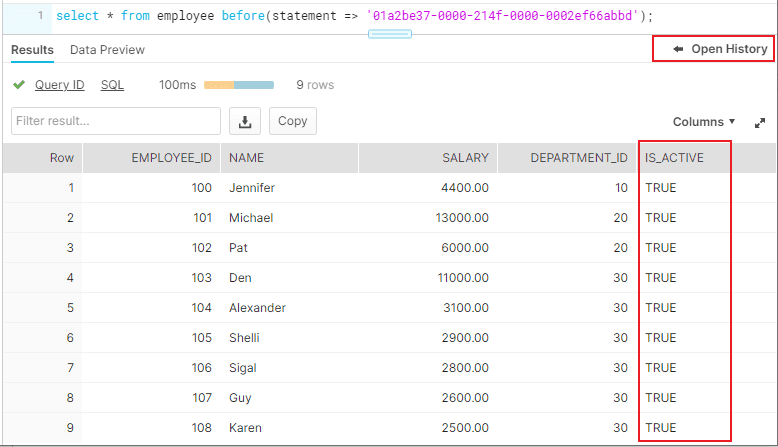

- BEFORE(STATEMENT => <query_id>) selects Historical Data from a Table up to, but not including, the changes made by the specified statement.
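The three AT | BEFORE variants above can be sketched with a hypothetical Python helper, `time_travel_select`, that builds the query text. It assumes an ISO 8601 timestamp cast to TIMESTAMP_TZ and rejects a positive OFFSET, since OFFSET counts seconds back from the present; the table name and statement ID in the usage are placeholders.

```python
from datetime import datetime, timezone
from typing import Optional


def time_travel_select(table: str, keyword: str = "AT", *,
                       timestamp: Optional[datetime] = None,
                       offset_seconds: Optional[int] = None,
                       statement_id: Optional[str] = None) -> str:
    """Build SELECT * FROM <table> AT|BEFORE(<parameter> => <value>)."""
    if keyword not in ("AT", "BEFORE"):
        raise ValueError("keyword must be 'AT' or 'BEFORE'")
    supplied = [v for v in (timestamp, offset_seconds, statement_id) if v is not None]
    if len(supplied) != 1:
        raise ValueError("pass exactly one of timestamp, offset_seconds, statement_id")
    if timestamp is not None:
        # ISO 8601 text cast to TIMESTAMP_TZ keeps the time zone explicit.
        param = f"TIMESTAMP => '{timestamp.isoformat()}'::TIMESTAMP_TZ"
    elif offset_seconds is not None:
        # OFFSET counts seconds back from now, so it must not be positive.
        if offset_seconds > 0:
            raise ValueError("OFFSET must be zero or negative")
        param = f"OFFSET => {offset_seconds}"
    else:
        param = f"STATEMENT => '{statement_id}'"
    return f"SELECT * FROM {table} {keyword}({param});"


if __name__ == "__main__":
    ts = datetime(2022, 1, 13, 16, 20, tzinfo=timezone.utc)
    print(time_travel_select("employee", timestamp=ts))
    print(time_travel_select("employee", offset_seconds=-60 * 5))
    print(time_travel_select("employee", "BEFORE", statement_id="<query_id>"))
```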

How to Clone Historical Data in Snowflake?

In addition to queries, the AT | BEFORE Clause can be combined with the CLONE keyword in the CREATE command for a Table, Schema, or Database to create a logical duplicate of the object at a specific point in its history.

For example:

- CREATE TABLE <new_table> CLONE <table> AT(TIMESTAMP => <timestamp>) generates a Clone of a Table as of the Date and Time indicated by the Timestamp.

- CREATE SCHEMA <new_schema> CLONE <schema> AT(OFFSET => -3600) produces a Clone of a Schema and all of its Objects as they were an hour ago.

- CREATE DATABASE <new_database> CLONE <database> BEFORE(STATEMENT => <query_id>) produces a Clone of a Database and all of its Objects as they were before the specified statement was completed.
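A minimal sketch of how these cloning statements are composed, assuming the AT or BEFORE clause is written by the caller. `clone_statement` and all object names are hypothetical; the helper only checks the object kind and the shape of the clause before formatting the string.

```python
def clone_statement(kind: str, new_name: str, source: str, point: str) -> str:
    """Build CREATE <kind> <new_name> CLONE <source> <point>;

    `point` is an AT(...) or BEFORE(...) clause supplied by the caller.
    """
    kind = kind.upper()
    if kind not in ("TABLE", "SCHEMA", "DATABASE"):
        raise ValueError("expected TABLE, SCHEMA, or DATABASE")
    if not point.startswith(("AT(", "BEFORE(")):
        raise ValueError("point must be an AT(...) or BEFORE(...) clause")
    return f"CREATE {kind} {new_name} CLONE {source} {point};"


if __name__ == "__main__":
    print(clone_statement("table", "employee_clone", "employee",
                          "AT(TIMESTAMP => '2022-01-13T16:20:00+00:00'::TIMESTAMP_TZ)"))
    print(clone_statement("schema", "restored_schema", "my_schema", "AT(OFFSET => -3600)"))
    print(clone_statement("database", "restored_db", "my_db",
                          "BEFORE(STATEMENT => '<query_id>')"))
```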

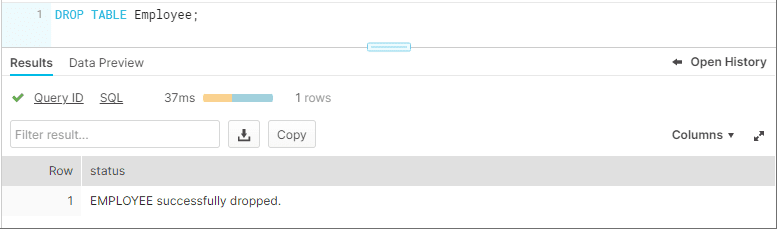

Using UNDROP Command with Snowflake Time Travel: How to Restore Objects?

The following commands can be used to restore a dropped object that has not been purged from the system (i.e. the item is still visible in the SHOW <object_type> HISTORY output):

- UNDROP DATABASE

- UNDROP TABLE

- UNDROP SCHEMA

UNDROP restores the object to its most recent state before the DROP command was issued.

A dropped Database can be restored using the UNDROP command, for example UNDROP DATABASE <database_name>;

Similarly, you can UNDROP Tables and Schemas.
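The following toy model illustrates two UNDROP rules from Snowflake's documentation that are easy to trip over: if an object has been dropped several times, UNDROP restores the most recently dropped version, and UNDROP fails while an object with the same name exists (so the existing object must be renamed first). The `Catalog` class is hypothetical and ignores retention-period expiry.

```python
class Catalog:
    """Toy model of UNDROP behavior; not how Snowflake stores objects."""

    def __init__(self):
        self.live = {}     # name -> current contents
        self.dropped = {}  # name -> dropped versions, oldest first

    def create(self, name, contents):
        if name in self.live:
            raise ValueError(f"object {name!r} already exists")
        self.live[name] = contents

    def drop(self, name):
        # A dropped object is kept (within the retention period), not erased.
        self.dropped.setdefault(name, []).append(self.live.pop(name))

    def undrop(self, name):
        if name in self.live:
            # UNDROP fails while an object with the same name exists.
            raise ValueError(f"object {name!r} already exists; rename it first")
        versions = self.dropped.get(name)
        if not versions:
            raise KeyError(f"no dropped version of {name!r} is retained")
        # The most recently dropped version is restored.
        self.live[name] = versions.pop()


if __name__ == "__main__":
    c = Catalog()
    c.create("employee", "v1")
    c.drop("employee")
    c.create("employee", "v2")
    c.drop("employee")
    c.undrop("employee")
    print(c.live["employee"])  # v2
```

To get at the older `v1` in this model, rename the restored table and call `undrop` again, which mirrors the rename-then-UNDROP approach.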

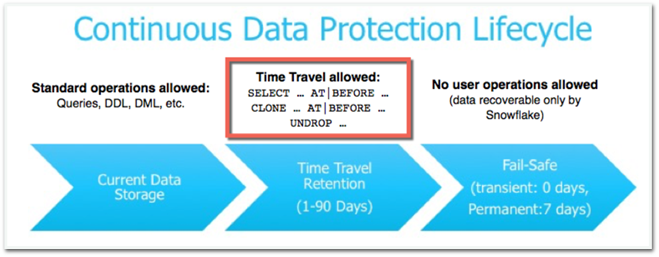

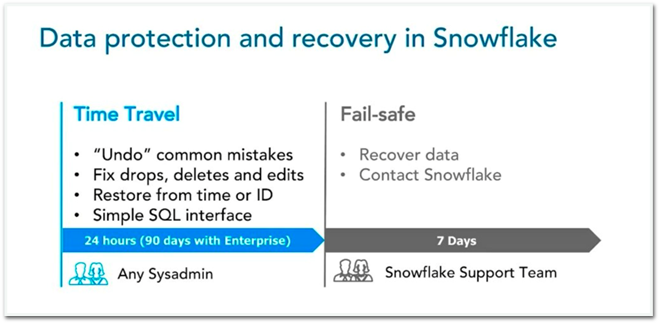

Snowflake Fail-Safe vs Snowflake Time Travel: What is the Difference?

In the event of a System Failure or other Catastrophic Event, such as a Hardware Failure or a Security Incident, Fail-Safe ensures that Historical Data is preserved. Snowflake Time Travel, by contrast, allows you to Access Historical Data (that is, data that has been updated or removed) at any point within the Retention Period.

Fail-Safe mode allows Snowflake to recover Historical Data for a (non-configurable) 7-day period . This time begins as soon as the Snowflake Time Travel Retention Period expires.
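The timeline can be worked out with simple date arithmetic: Time Travel ends `retention_days` after a change, and the 7-day Fail-safe period starts when Time Travel ends. This sketch assumes a permanent object (transient and temporary objects have no Fail-safe period); `protection_windows` is a hypothetical helper.

```python
from datetime import datetime, timedelta

FAIL_SAFE_DAYS = 7  # fixed by Snowflake; not configurable


def protection_windows(changed_at: datetime, retention_days: int):
    """Return (time_travel_ends, fail_safe_ends) for data changed at `changed_at`.

    Assumes a permanent object; transient and temporary objects have no Fail-safe.
    """
    time_travel_ends = changed_at + timedelta(days=retention_days)
    fail_safe_ends = time_travel_ends + timedelta(days=FAIL_SAFE_DAYS)
    return time_travel_ends, fail_safe_ends


if __name__ == "__main__":
    tt_end, fs_end = protection_windows(datetime(2022, 1, 13, 9, 0), retention_days=1)
    print("Time Travel until:", tt_end)  # 2022-01-14 09:00:00
    print("Fail-safe until:", fs_end)    # 2022-01-21 09:00:00
```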

This article introduced the main Snowflake Time Travel features to help you make the most of your data.



Overview of Snowflake Time Travel

Consider a scenario where, instead of dropping a backup table, you accidentally dropped the actual table, or instead of updating a set of records, you accidentally updated all the records in the table (because you didn't use a WHERE clause in your UPDATE statement).

What would your next action be after realizing your mistake? You would probably want to go back in time to a point before you executed the incorrect statement so that you can undo your mistake.

Snowflake provides exactly this feature, which lets you get back the data as it was at a particular point in time. This feature in Snowflake is called Time Travel.

Let us understand more about Snowflake Time Travel in this article with examples.

1. What is Snowflake Time Travel?

Snowflake Time Travel enables accessing historical data that has been changed or deleted at any point within a defined period. It is a powerful CDP (Continuous Data Protection) feature which ensures the maintenance and availability of your historical data.

Below actions can be performed using Snowflake Time Travel within a defined period of time:

- Restore tables, schemas, and databases that have been dropped.

- Query data in the past that has since been updated or deleted.

- Create clones of entire tables, schemas, and databases at or before specific points in the past.

Once the defined period of time has elapsed, the data is moved into Snowflake Fail-Safe and these actions can no longer be performed.

2. Restoring Dropped Objects

A dropped object can be restored within the Snowflake Time Travel retention period using the “UNDROP” command.

Suppose the table ‘Employee’ was accidentally dropped instead of a backup table.

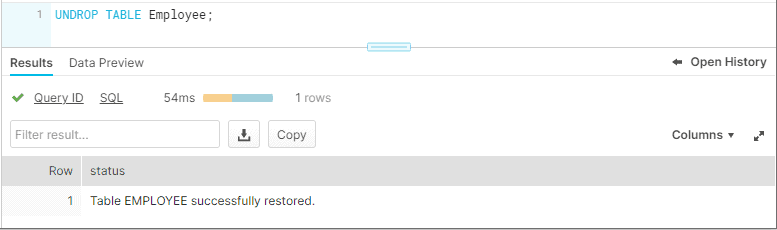

It can be easily restored with the Snowflake UNDROP command: UNDROP TABLE Employee;

Databases and Schemas can also be restored using the UNDROP command.

Calling UNDROP restores the object to its most recent state before the DROP command was issued.

3. Querying Historical Objects

When unwanted DML operations are performed on a table, the Snowflake Time Travel feature enables querying earlier versions of the data using the AT | BEFORE clause.

The AT | BEFORE clause is specified in the FROM clause immediately after the table name and it determines the point in the past from which historical data is requested for the object.

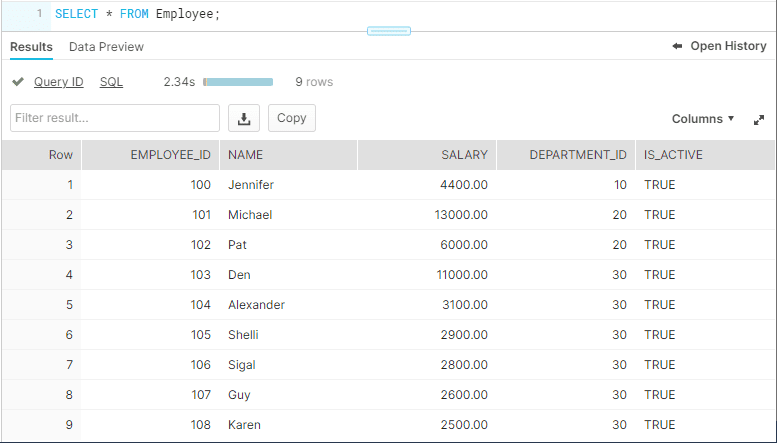

Let us understand with an example. Consider the table Employee. The table has a field IS_ACTIVE which indicates whether an employee is currently working in the Organization.

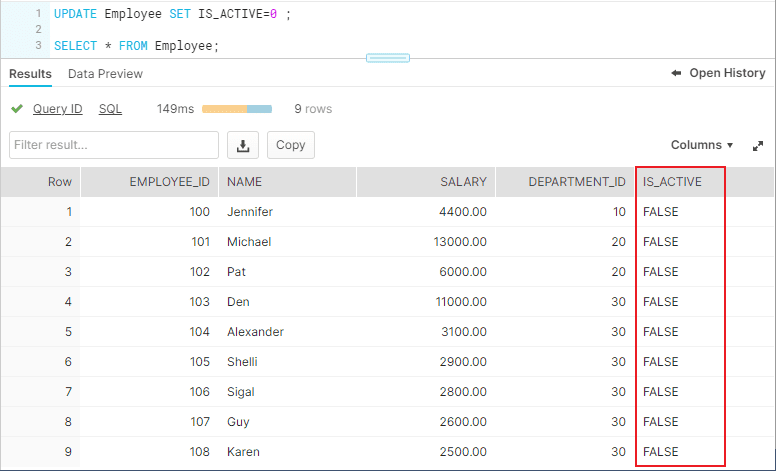

The employee ‘Michael’ has left the organization, so the field IS_ACTIVE needs to be updated to FALSE. Instead, you accidentally updated IS_ACTIVE to FALSE for all the records in the table.

There are three different ways you could query the historical data using AT | BEFORE Clause.

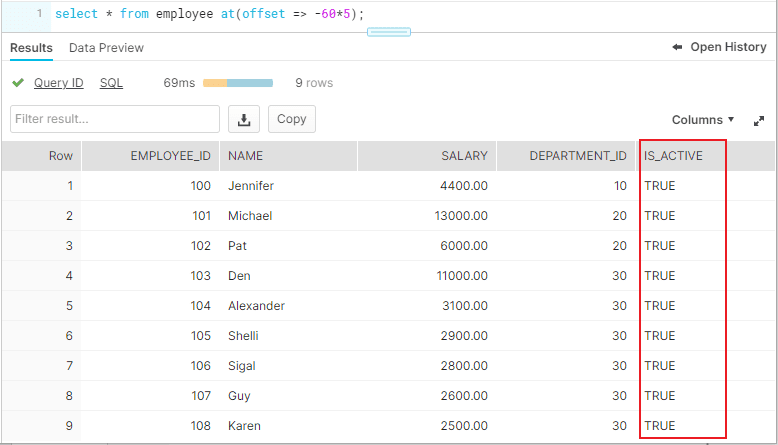

3.1. OFFSET

“OFFSET” is the time difference in seconds from the present time.

For example, SELECT * FROM Employee AT(OFFSET => -60*5); selects historical data from the table as of 5 minutes ago.

3.2. TIMESTAMP

Use “TIMESTAMP” to get the data at or before a particular date and time.

For example, SELECT * FROM Employee AT(TIMESTAMP => <timestamp>); selects historical data from the table as of the date and time represented by the specified timestamp.

3.3. STATEMENT

Identifier for statement, e.g. query ID

For example, SELECT * FROM Employee BEFORE(STATEMENT => <query_id>); selects historical data from the table up to, but not including, any changes made by the specified statement.

Here, the query ID to use is that of the UPDATE statement executed earlier. It can be obtained from “Open History”.

4. Cloning Historical Objects

We have seen how to query the historical data. In addition, the AT | BEFORE clause can be used with the CLONE keyword in the CREATE command to create a logical duplicate of the object at a specified point in the object’s history.

A table can be cloned using the AT | BEFORE clause in three different ways: with OFFSET, TIMESTAMP, or STATEMENT.

To restore the data in the table to a historical state, create a clone using AT | BEFORE clause, drop the actual table and rename the cloned table to the actual table name.
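The clone, drop, and rename steps can be sketched as a list of SQL statements generated in Python, to be run in order. The helper name, the `_restored` suffix, and the query ID are placeholders; in practice the ID would come from the query history.

```python
def restore_table_statements(table: str, query_id: str) -> list:
    """Clone-drop-rename sequence that restores `table` to its state
    just before the statement identified by `query_id`."""
    clone = f"{table}_restored"  # hypothetical name for the intermediate clone
    return [
        # 1. Clone the table as it was just before the bad statement ran.
        f"CREATE TABLE {clone} CLONE {table} BEFORE(STATEMENT => '{query_id}');",
        # 2. Drop the damaged table (still recoverable with UNDROP during retention).
        f"DROP TABLE {table};",
        # 3. Give the clone the original table's name.
        f"ALTER TABLE {clone} RENAME TO {table};",
    ]


if __name__ == "__main__":
    for stmt in restore_table_statements("employee", "<query_id>"):
        print(stmt)
```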

5. Data Retention Period

A key component of Snowflake Time Travel is the data retention period.

When data in a table is modified, deleted or the object containing data is dropped, Snowflake preserves the state of the data before the update. The data retention period specifies the number of days for which this historical data is preserved.

Time Travel operations can be performed on the data during this data retention period of the object. When the retention period ends for an object, the historical data is moved into Snowflake Fail-safe.

6. How to find the Time Travel Data Retention period of Snowflake Objects?

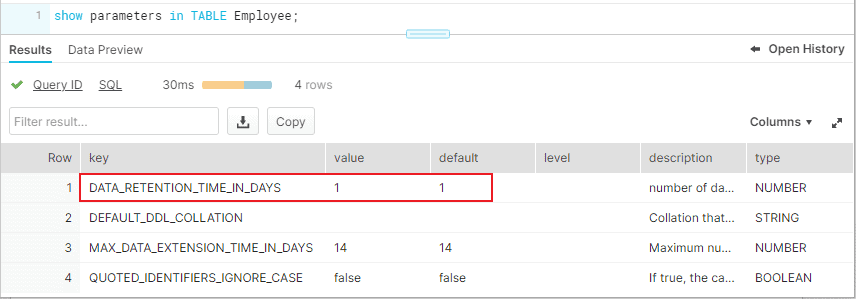

The SHOW PARAMETERS command can be used to find the Time Travel retention period of Snowflake objects.

The command works for databases, schemas, and tables, using IN DATABASE, IN SCHEMA, or IN TABLE followed by the object name.

The DATA_RETENTION_TIME_IN_DAYS parameter specifies the number of days to retain old versions of deleted or updated data.

The below image shows that the table Employee has the DATA_RETENTION_TIME_IN_DAYS value set as 1.

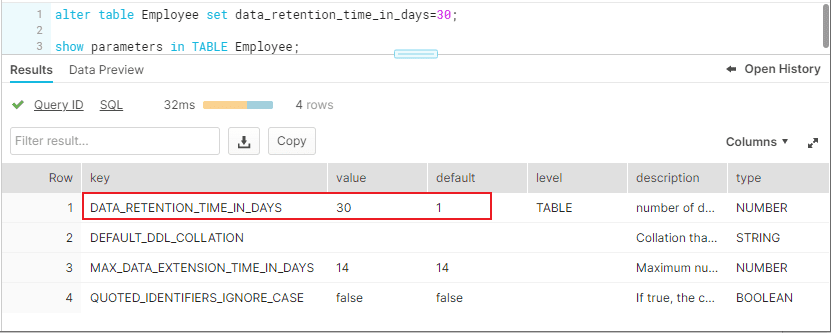
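The SHOW PARAMETERS commands for the three object levels can be sketched with a small hypothetical helper; it only builds the string, and the object names are placeholders.

```python
def show_retention(kind: str, name: str) -> str:
    """SHOW PARAMETERS statement for one database, schema, or table."""
    kind = kind.upper()
    if kind not in ("DATABASE", "SCHEMA", "TABLE"):
        raise ValueError("expected DATABASE, SCHEMA, or TABLE")
    return f"SHOW PARAMETERS LIKE 'DATA_RETENTION_TIME_IN_DAYS' IN {kind} {name};"


if __name__ == "__main__":
    for kind, name in [("database", "my_db"),
                       ("schema", "my_db.my_schema"),
                       ("table", "my_db.my_schema.employee")]:
        print(show_retention(kind, name))
```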

7. How to set custom Time-Travel Data Retention period for Snowflake Objects?

Time travel is automatically enabled with the standard, 1-day retention period. However, you may wish to upgrade to Snowflake Enterprise Edition or higher to enable configuring longer data retention periods of up to 90 days for databases, schemas, and tables.

You can configure the data retention period of a table while creating it by adding DATA_RETENTION_TIME_IN_DAYS = <days> to the CREATE TABLE statement.

To modify the data retention period of an existing table, use ALTER TABLE <table_name> SET DATA_RETENTION_TIME_IN_DAYS = <days>;

The below image shows that the data retention period of the table has been altered to 30 days.

A retention period of 0 days for an object effectively disables Time Travel for the object.

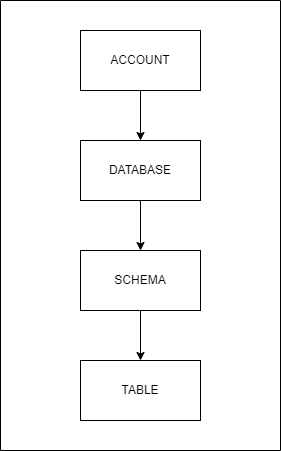

8. Data Retention Period Rules and Inheritance

Changing the retention period for your account or individual objects changes the value for all lower-level objects that do not have a retention period explicitly set. For example:

- If you change the retention period at the account level, all databases, schemas, and tables that do not have an explicit retention period automatically inherit the new retention period.

- If you change the retention period at the schema level, all tables in the schema that do not have an explicit retention period inherit the new retention period.

Currently, when a database is dropped, the data retention period for child schemas or tables, if explicitly set to be different from the retention of the database, is not honored. The child schemas or tables are retained for the same period of time as the database.

- To honor the data retention period for these child objects (schemas or tables), drop them explicitly before you drop the database or schema.
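The inheritance rules above can be sketched as a lookup that walks from the table up to the account and returns the first explicitly set value, plus the dropped-database exception. The function names are hypothetical, `None` stands for "not explicitly set", and the sketch ignores MIN_DATA_RETENTION_TIME_IN_DAYS.

```python
from typing import Optional


def effective_retention(account: int, database: Optional[int] = None,
                        schema: Optional[int] = None,
                        table: Optional[int] = None) -> int:
    """Retention a table uses: the nearest explicitly set value wins.

    None means "not explicitly set" at that level; 0 is a real value.
    """
    for explicit in (table, schema, database):
        if explicit is not None:
            return explicit
    return account


def retention_after_drop(table_days: int, database_days: int,
                         table_dropped_first: bool) -> int:
    """Period a table is retained once its database has been dropped.

    The table's own period is honored only if the table was dropped
    explicitly before the database; otherwise the database's period applies.
    """
    return table_days if table_dropped_first else database_days


if __name__ == "__main__":
    print(effective_retention(account=1, database=30))             # 30
    print(effective_retention(account=1, database=30, table=5))    # 5
    print(retention_after_drop(5, 30, table_dropped_first=False))  # 30
```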


Panic hits when you mistakenly delete data. Problems can come from a mistake that disrupts a process, or worse, the whole database was deleted. Thoughts of how recent the last backup was and how much time will be lost might have you wishing for a rewind button. With Snowflake's Time Travel, straightening out your database doesn't have to be a disaster. A few SQL commands allow you to go back in time and reclaim the past, saving you from the time and stress of a more extensive restore.

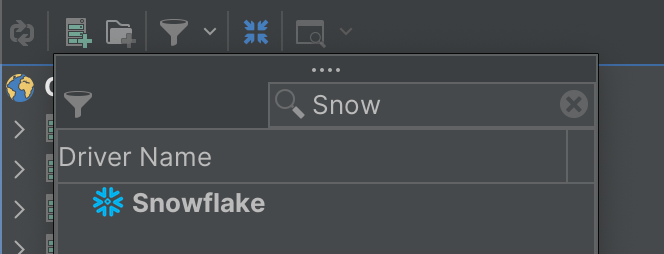

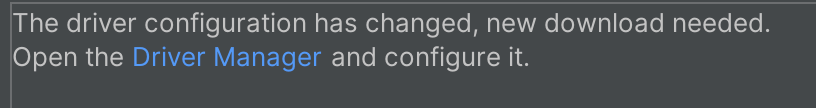

We'll get started in the Snowflake web console, configure data retention, and use Time Travel to retrieve historic data. Before querying for your previous database states, let's review the prerequisites for this guide.

Prerequisites

- Quick Video Introduction to Snowflake

- Snowflake Data Loading Basics Video

What You'll Learn

- Snowflake account and user permissions

- Make database objects

- Set data retention timelines for Time Travel

- Query Time Travel data

- Clone past database states

- Remove database objects

- Next options for data protection

What You'll Need

- A Snowflake Account

What You'll Build

- Create database objects with Time Travel data retention

First things first, let's get your Snowflake account and user permissions primed to use Time Travel features.

Create a Snowflake Account

Snowflake lets you try out its services for free with a trial account. A Standard account allows for one day of Time Travel data retention, and an Enterprise account allows for up to 90 days of data retention. An Enterprise account is necessary to practice some commands in this tutorial.

Login and Setup Lab

Log into your Snowflake account. You can access the SQL commands we will execute throughout this lab directly in your Snowflake account by setting up your environment below:

Setup Lab Environment

This will create worksheets containing the lab SQL that can be executed as we step through this lab.

Once the lab has been set up, it can be continued by revisiting the lab details page and clicking Continue Lab, or by navigating to Worksheets and selecting the Getting Started with Time Travel folder.

Increase Your Account Permission

Snowflake's web interface has a lot to offer, but for now, switch the account role from the default SYSADMIN to ACCOUNTADMIN . You'll need this increase in permissions later.

Now that you have the account and user permissions needed, let's create the required database objects to test drive Time Travel.

Within the Snowflake web console, navigate to Worksheets and use the 'Getting Started with Time Travel' Worksheets we created earlier.

Create Database

Run CREATE DATABASE timeTravel_db; to make a database called 'timeTravel_db'. The Results output will show a status message of Database TIMETRAVEL_DB successfully created.

Create Table

A CREATE TABLE command creates a table named 'timeTravel_table' in the timeTravel_db database. The Results output should show a status message of Table TIMETRAVEL_TABLE successfully created.

With the Snowflake account and database ready, let's get down to business by configuring Time Travel.

Be ready for anything by setting up data retention beforehand. The default setting is one day of data retention. However, if your one day mark passes and you need the previous database state back, you can't retroactively extend the data retention period. This section teaches you how to be prepared by preconfiguring Time Travel retention.

Alter Table

Running ALTER TABLE timeTravel_table SET DATA_RETENTION_TIME_IN_DAYS = 55; changes the table's data retention period to 55 days. If you opted for a Standard account, your data retention period is limited to the default of one day. An Enterprise account allows for up to 90 days of preservation in Time Travel.

Now you know how easy it is to alter your data retention, let's bend the rules of time by querying an old database state with Time Travel.

With your data retention period specified, let's turn back the clock with the AT and BEFORE clauses .

Use timestamp to summon the database state at a specific date and time.

Employ offset to call up the table state at a given time difference from the current time. Calculate the offset in seconds with math expressions: for example, AT(OFFSET => -60*5) refers to five minutes ago.
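The offset arithmetic can be wrapped in a small hypothetical helper that converts a look-back duration into the negative number of seconds that OFFSET expects.

```python
def offset_seconds(days: int = 0, hours: int = 0, minutes: int = 0,
                   seconds: int = 0) -> int:
    """Convert a look-back duration into a (negative) OFFSET value in seconds."""
    total = ((days * 24 + hours) * 60 + minutes) * 60 + seconds
    if total < 0:
        raise ValueError("pass a positive duration; the minus sign is added here")
    return -total


if __name__ == "__main__":
    print(offset_seconds(minutes=5))  # -300, the same as -60*5
    print(offset_seconds(hours=1))    # -3600
```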

If you're looking to restore a table state from just before a transaction occurred, grab the transaction's statement ID. Use the BEFORE clause with your statement ID, as in BEFORE(STATEMENT => '<statement_id>'), to get the table state right before the transaction statement was executed.

By practicing these queries, you'll be confident in how to find a previous database state. After locating the desired database state, you'll need to get a copy by cloning in the next step.

With the past at your fingertips, make a copy of the old database state you need with the clone keyword.

Clone Table

Running CREATE TABLE restoredTimeTravel_table CLONE timeTravel_table AT(OFFSET => -60*5); creates a new table named restoredTimeTravel_table that is an exact copy of the table timeTravel_table from five minutes prior.

Cloning will allow you to maintain the current database while getting a copy of a past database state. After practicing the steps in this guide, remove the practice database objects in the next section.

You've created a Snowflake account, made database objects, configured data retention, queried old table states, and generated a copy of an old table state. Pat yourself on the back! Complete this tutorial by deleting the objects you created.

By dropping the table before the database, the retention period previously specified on the table is honored. If a parent object (e.g., a database) is dropped without the child object (e.g., a table) being dropped first, the child's explicitly set retention period is not honored; the child is retained for the same period as the parent.

Drop Database

With the database now removed, you've completed learning how to call, copy, and erase the past.

How to Leverage the Time Travel Feature on Snowflake

Welcome to Time Travel in the Snowflake Data Cloud. You may be tempted to think “only superheroes can Time Travel,” and you would be right. But Snowflake gives you the ability to be your own real-life superhero.

Have you ever feared deleting the wrong data in your production database? Or that your carefully written script might accidentally remove the wrong records? Never fear, you are here – with Snowflake Time Travel!

What’s The Big Deal?

Snowflake Time Travel, when properly configured, allows any Snowflake user with the proper permissions to recover and query data that has been changed or deleted within the last 90 days (though this recovery period depends on the Snowflake edition, as we'll see later).

This provides comprehensive, robust, and configurable data history in Snowflake that your team doesn’t have to manage! It includes the following advantages:

- Data (or even entire databases and schemas) can be restored that may have been lost due to a deletion, no matter if that deletion was on purpose or not

- The ability to maintain backup copies of your data for all past versions of it for a period of time

- Allowing for inspection of changes made over specific periods of time

To further investigate these features, we will look at:

- How Time Travel works

- How to configure Time Travel in your account

- How to use Time Travel

- How Time Travel impacts Snowflake cost

- Some Time Travel best practices

How Time Travel Works

Before we learn how to use it, let’s understand a little more about why Snowflake can offer this feature.

Snowflake stores the records in each table in immutable objects called micro-partitions that contain a subset of the records in a given table.

Each time records are changed (inserted, updated, or deleted), Snowflake writes new micro-partitions instead of modifying the existing ones, preserving the previous micro-partitions to create an immutable historical record of the data in the table at any given moment in time.

Time Travel is simply accessing the micro-partitions that were current for the table at a particular moment in time.
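This toy model shows why immutable storage makes Time Travel straightforward: every change adds a new snapshot, and a historical read just picks the snapshot that was current at the requested time. It is a simplification that treats each whole table state as one snapshot, whereas Snowflake writes new micro-partitions only for the changed data. It also illustrates the AT versus BEFORE distinction: AT includes a change made exactly at the given time, while BEFORE excludes it. `VersionedTable` is hypothetical.

```python
import bisect


class VersionedTable:
    """Toy model: every change appends a new immutable snapshot; nothing is overwritten."""

    def __init__(self):
        self._times = []      # commit times, ascending
        self._snapshots = []  # tuple of rows after each commit

    def commit(self, at_time, rows):
        if self._times and at_time <= self._times[-1]:
            raise ValueError("commits must be in time order")
        self._times.append(at_time)
        self._snapshots.append(tuple(rows))

    def at(self, t):
        """State as of time t, including a change committed exactly at t."""
        i = bisect.bisect_right(self._times, t)
        return self._snapshots[i - 1] if i else ()

    def before(self, t):
        """State immediately before time t, excluding a change committed at t."""
        i = bisect.bisect_left(self._times, t)
        return self._snapshots[i - 1] if i else ()


if __name__ == "__main__":
    table = VersionedTable()
    table.commit(1, ["a"])
    table.commit(5, ["a", "b"])
    table.commit(9, ["b"])
    print(table.at(5))      # ('a', 'b')
    print(table.before(5))  # ('a',)
```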

How To Configure Time Travel In Your Account

Time Travel is available and enabled in all account types.

However, the extent to which it is available is dependent on the type of Snowflake account, the object type, and the access granted to your user.

Default Retention Period

The retention period is the amount of time you can travel back and recover the state of a table at a given point in time. The maximum allowed value depends on the account’s edition. The default Time Travel retention period is 1 day (24 hours).

PRO TIP: Snowflake does have an additional layer of data protection called fail-safe , which is only accessible by Snowflake to restore customer data past the time travel window. However, unlike time travel, it should not be considered as a part of your organization’s backup strategy.

Account/Object Type Considerations

All Snowflake accounts have Time Travel for permanent databases, schemas, and tables enabled for the default retention period.

Snowflake Standard accounts (and above) can remove Time Travel retention altogether by setting the retention period to 0 days, effectively disabling Time Travel.

Snowflake Enterprise accounts (and above) can set the Time Travel retention period for transient databases, schemas, tables, and temporary tables to either 0 or 1 day. They can also set the retention period for permanent databases, schemas, and tables to any value from 0 to 90 days.

The following table summarizes the above considerations:

Changing Retention Period

For Snowflake Enterprise accounts, two account-level parameters can be used to change the default account-level retention time:

- DATA_RETENTION_TIME_IN_DAYS: The number of days that Snowflake stores historical data for the purpose of Time Travel.

- MIN_DATA_RETENTION_TIME_IN_DAYS: The minimum number of days that Snowflake stores historical data for the purpose of Time Travel. When it is set, an object’s effective retention is the larger of this value and the object’s own DATA_RETENTION_TIME_IN_DAYS.

The parameter DATA_RETENTION_TIME_IN_DAYS can also be set at the object level to override the default retention time for an object and its children.
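The following is a minimal sketch of both levels. It assumes a role with the required privileges (account-level parameters are normally set by ACCOUNTADMIN) and uses the hypothetical object names my_db, my_schema, and my_table. Values above 1 day assume Enterprise Edition or higher.

```sql
-- Account level (normally run as ACCOUNTADMIN): default retention for objects
-- that do not set their own value
ALTER ACCOUNT SET DATA_RETENTION_TIME_IN_DAYS = 30;

-- Account level: minimum retention; an object's effective retention is the
-- larger of this value and its own DATA_RETENTION_TIME_IN_DAYS
ALTER ACCOUNT SET MIN_DATA_RETENTION_TIME_IN_DAYS = 7;

-- Object level: override the default for a database, a schema, or one table
-- (my_db, my_schema, and my_table are hypothetical names)
ALTER DATABASE my_db SET DATA_RETENTION_TIME_IN_DAYS = 14;
ALTER SCHEMA my_db.my_schema SET DATA_RETENTION_TIME_IN_DAYS = 14;
ALTER TABLE my_db.my_schema.my_table SET DATA_RETENTION_TIME_IN_DAYS = 90;
```

A table-level setting takes precedence over the schema and database settings for that table only.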

How To Use Time Travel

Using Time Travel is easy! There are two sets of SQL commands that can invoke Time Travel capabilities:

- AT or BEFORE: clauses for both SELECT and CREATE ... CLONE statements. AT is inclusive and BEFORE is exclusive.

- UNDROP: command for restoring a deleted table/schema/database

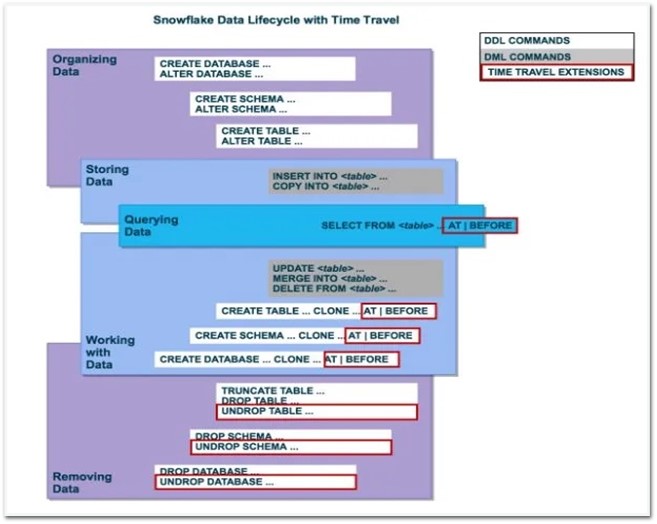

The following graphic from the Snowflake documentation summarizes this visually:

Query Historical Data

You can query historical data using the AT or BEFORE clauses and one of three parameters:

- TIMESTAMP: A specific historical timestamp at which to query data from a particular object. Example: SELECT * FROM my_table AT (TIMESTAMP => 'Fri, 01 May 2015 15:00:00 -0700'::TIMESTAMP_TZ);

- OFFSET: The difference in seconds from the current time at which to query data from a particular object. Example: CREATE SCHEMA restored_schema CLONE my_schema AT (OFFSET => -4800);

- STATEMENT: The query ID of a statement that is used as a reference point from which to query data from a particular object. Example: CREATE DATABASE restored_db CLONE my_db BEFORE (STATEMENT => '8e5d0ca9-005e-44e6-b858-a8f5b37c5726');

The one thing to understand is that these commands work only within the retention period of the object you are querying. So, if your retention time is set to the default of one day and you try to UNDROP a table two days after dropping it, you will receive an error and be out of luck!
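Everything depends on the retention period that is actually in effect, so it can be worth checking it before you rely on Time Travel. The sketch below assumes a hypothetical table my_db.my_schema.my_table.

```sql
-- Effective Time Travel retention for one table (hypothetical name)
SHOW PARAMETERS LIKE 'DATA_RETENTION_TIME_IN_DAYS' IN TABLE my_db.my_schema.my_table;

-- SHOW TABLES also reports it, in the retention_time column
SHOW TABLES LIKE 'my_table' IN SCHEMA my_db.my_schema;
```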


Restore Deleted Objects

You can also restore objects that have been dropped by using the UNDROP command. For this command to succeed, no other table with the same fully qualified name (database.schema.table) can currently exist.

Example: UNDROP TABLE my_table;

How Time Travel Impacts Snowflake Cost

Snowflake accounts are billed for Time Travel storage for each 24-hour period during which historical data (the retained micro-partitions) must be kept.

Every time a table’s data changes, the historical version of the changed data is retained (and charged in addition) for the entire retention period. This is not necessarily an entire second copy of the table. Snowflake tries to retain only the minimal amount of historical data needed, but that data still incurs additional storage costs.

As an example, if every row of a 100 GB table were changed ten times a day, the storage consumed (and charged) for this data per day would be 100GB x 10 changes = 1 TB.
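One way to see how much Time Travel storage your tables actually consume is the TABLE_STORAGE_METRICS view in a database’s INFORMATION_SCHEMA. The sketch below assumes a hypothetical database named my_db. The SNOWFLAKE.ACCOUNT_USAGE schema offers a similar view that covers the whole account.

```sql
-- Compare active storage with Time Travel and Fail-safe storage per table
SELECT table_catalog,
       table_schema,
       table_name,
       active_bytes      / POWER(1024, 3) AS active_gb,
       time_travel_bytes / POWER(1024, 3) AS time_travel_gb,
       failsafe_bytes    / POWER(1024, 3) AS failsafe_gb
FROM my_db.INFORMATION_SCHEMA.TABLE_STORAGE_METRICS
ORDER BY time_travel_bytes DESC
LIMIT 20;
```

Tables where time_travel_gb is large relative to active_gb are the high-churn candidates discussed below.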

What can you do to optimize cost to ensure your ops team does not wake up to an unnecessarily large Time Travel bill? Below are a couple of suggestions.

Use Transient and Temporary Tables When Possible

If data does not need to be protected using Time Travel, or there is data only being used as an intermediate stage in an ETL process, then take advantage of using transient and temporary tables with the DATA_RETENTION_TIME_IN_DAYS parameter set to 0. This will essentially disable Time Travel and make sure there are no extra costs because of it.
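Here is a sketch of both table types with Time Travel turned off. The database, schema, table, and column names are all hypothetical.

```sql
-- Transient staging table: no Fail-safe, and Time Travel turned off
CREATE TRANSIENT TABLE my_db.staging.orders_stage (
    order_id  NUMBER,
    amount    NUMBER(12, 2),
    loaded_at TIMESTAMP_NTZ
)
DATA_RETENTION_TIME_IN_DAYS = 0;

-- Temporary table: exists only for the current session
CREATE TEMPORARY TABLE orders_scratch (
    order_id NUMBER,
    amount   NUMBER(12, 2)
)
DATA_RETENTION_TIME_IN_DAYS = 0;
```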

Copy Large High-Churn Tables

If you have large permanent tables where a high percentage of records are often changed every day, it might be a good idea to change your storage strategy for these tables based on the cost implications mentioned above.

One way of dealing with such a table would be to create it as a transient table with 0 Time Travel retention (DATA_RETENTION_TIME_IN_DAYS=0) and copy it over to a permanent table on a periodic basis.

This would allow you to control the number of copies of this data you maintain without worrying about ballooning Time Travel costs in the background.
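Below is one possible sketch of this pattern, with hypothetical names throughout. In practice, the dated target table name would be generated by whatever scheduler runs the copy. CREATE TABLE ... AS SELECT writes a full physical copy, so each copy is stored independently of the transient source.

```sql
-- High-churn table kept as transient, with Time Travel disabled
CREATE TRANSIENT TABLE my_db.ops.inventory_live (
    sku        STRING,
    qty        NUMBER,
    updated_at TIMESTAMP_NTZ
)
DATA_RETENTION_TIME_IN_DAYS = 0;

-- Periodic copy into a permanent table (for example, run nightly);
-- the date suffix in the table name is illustrative only
CREATE TABLE my_db.archive.inventory_2024_01_31 AS
SELECT * FROM my_db.ops.inventory_live;
```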

Time Travel is an incredibly useful tool that removes the need for your team to maintain backups, snapshots, complex restoration processes, and so on, as you would with a traditional database. Specifically, it enables the following advantages:

- Data recovery/restoration: use the ability to query historical data to restore old versions of a particular dataset, or recover databases/schemas/tables that have been deleted

- Backups: unless explicitly disabled, Time Travel automatically maintains copies of all past versions of your data for at least 1 day and up to 90 days

- Change auditing: the queryable nature of Time Travel allows for inspection of changes made to your data over specific periods of time

Final Thoughts

Hopefully, this has helped you understand how to use Snowflake Time Travel, the context around how it works, and some of its cost implications.

If your organization needs help using or configuring Time Travel, or any other Snowflake feature, phData is a certified Elite Snowflake partner, and we would love to hear from you so that our team can help drive the value of your organization’s data forward!


- © 2023 phData


Time Travel in Snowflake

Talati adit anil.

June 01, 2023

Consider a scenario where you accidentally dropped a table, or, instead of deleting a set of records, you updated all the records in the table. What will you do? How will you restore data that has already been deleted or altered? You are probably hoping to go back in time and correct the incorrectly executed statements. Snowflake provides a feature that lets you get back the data as it was at a particular time. This feature of Snowflake is called Time Travel.

Introduction

Snowflake Time Travel is a very important tool that allows users to access Historical Data (i.e. data that has been updated or removed) at any point in time in the past. It is a powerful Continuous Data Protection (CDP) feature that ensures the maintenance and availability of historical data.

Key Features

- Query Optimization: Users do not need to worry about optimizing queries, because Snowflake optimizes them on its own using Clustering and Partitioning.

- Secure Data Sharing: Databases, tables, views, and UDFs can be shared securely from one Snowflake account to another.

- Support for File Formats: In addition to structured data, Snowflake can import semi-structured formats such as JSON, Avro, ORC, Parquet, and XML. The VARIANT column type lets users store semi-structured data.

- Caching: Snowflake caches query results, so repeated identical queries return quickly as long as the underlying data has not changed.

- Fault Resistant: In the event of a failure, Snowflake provides exceptional fault-tolerant capabilities to recover tables, views, databases, schemas, and so on.

Snowflake Time Travel can be used:

- To query past data.

- To make clones of complete Tables, Schemas, and Databases at or before certain dates.

- To restore deleted Tables, Schemas, and Databases.

- To restore original data that was updated accidentally.

- To check consumption over a period of time.

- To clone and back up data from earlier points in time.

How to Enable & Disable Time Travel in Snowflake?

Enable Time Travel

No additional configuration is required to enable Time Travel, because it is enabled by default with a one-day retention period. To configure longer data retention periods, however, you need Snowflake Enterprise Edition (or higher). The retention period can be set to a maximum of 90 days, and longer retention periods increase storage charges. The below query builds a table with a retention period of 90 days:
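Here is a sketch of such a statement. It assumes Enterprise Edition and a hypothetical emp_db.public.employee table.

```sql
-- Permanent table with a 90-day Time Travel retention period
-- (values above 1 day require Enterprise Edition or higher; names are hypothetical)
CREATE TABLE emp_db.public.employee (
    emp_id   NUMBER,
    emp_name STRING,
    salary   NUMBER(10, 2)
)
DATA_RETENTION_TIME_IN_DAYS = 90;
```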

The retention period can also be changed using an ALTER query as below:
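For example, using the same hypothetical table:

```sql
-- Change the retention period of an existing table
ALTER TABLE emp_db.public.employee SET DATA_RETENTION_TIME_IN_DAYS = 30;

-- Remove the table-level value so the table inherits the schema/database/account default
ALTER TABLE emp_db.public.employee UNSET DATA_RETENTION_TIME_IN_DAYS;
```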

Disable Time Travel

Time Travel cannot be turned off for an account, but it can be turned off for individual databases, schemas, and tables by setting the DATA_RETENTION_TIME_IN_DAYS parameter to 0 using the below query:
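The sketch below shows each object level, with hypothetical names. If MIN_DATA_RETENTION_TIME_IN_DAYS is set at the account level, the effective retention is the larger of the two values. In that case, setting 0 here may not actually disable Time Travel.

```sql
-- Turn Time Travel off for a whole database, a schema, or a single table
ALTER DATABASE emp_db SET DATA_RETENTION_TIME_IN_DAYS = 0;
ALTER SCHEMA emp_db.public SET DATA_RETENTION_TIME_IN_DAYS = 0;
ALTER TABLE emp_db.public.employee SET DATA_RETENTION_TIME_IN_DAYS = 0;
```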

Query Time Travel Data

Whenever a Data Manipulation Language (DML) query is executed on a table, Snowflake saves prior versions of the table data for a period determined by the retention period. The previous version of the data can be queried using the AT | BEFORE clause. AT returns the data as it was at the specified point, including any changes made by a statement or transaction at exactly that point. BEFORE returns the data as it was immediately before the specified point. The following SQL extensions have been added to facilitate Snowflake Time Travel:

- CLONE: Creates a logical duplicate of the object at a specific point in its history.

- TIMESTAMP: A specific date and time.

- OFFSET: The time difference from the current time, in seconds.

- STATEMENT: The ID of a statement (such as a DML query), used as the reference point.

- UNDROP: Restores a table, schema, or database that was dropped accidentally.

The below query generates a Clone of a Table from the given Date and Time as indicated by the Timestamp:
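Here is a sketch of such a clone. It assumes the hypothetical emp_db.public.employee table and a placeholder timestamp that falls within the table’s retention period.

```sql
-- Clone a table as it existed at a specific point in time
CREATE TABLE emp_db.public.employee_restored
  CLONE emp_db.public.employee
  AT (TIMESTAMP => 'Wed, 31 May 2023 14:00:00 -0700'::TIMESTAMP_TZ);
```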

The below query creates a Clone of a Schema and all its Objects as they were an hour ago:
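The following sketch assumes a hypothetical emp_db.public schema. One hour is expressed as an offset of -3600 seconds.

```sql
-- Clone a schema and all of its objects as they were one hour ago
CREATE SCHEMA emp_db.public_restored
  CLONE emp_db.public
  AT (OFFSET => -3600);
```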

The below query pulls Historical Data from a Table from a given Timestamp:
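For example, with a hypothetical table and a placeholder timestamp:

```sql
-- Table contents exactly as of the given timestamp
SELECT *
FROM emp_db.public.employee
  AT (TIMESTAMP => 'Wed, 31 May 2023 14:00:00 -0700'::TIMESTAMP_TZ);
```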

The below query pulls Historical Data from a Table as it was 5 minutes ago:
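The sketch below uses OFFSET, which is expressed in seconds relative to the current time. The table name is hypothetical.

```sql
-- Table contents as of 5 minutes (300 seconds) ago
SELECT *
FROM emp_db.public.employee
  AT (OFFSET => -60 * 5);
```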

The below query pulls Historical Data from a Table as it was just before the modifications made by a given statement (Statement ID):
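The following sketch uses BEFORE with STATEMENT. The table name is hypothetical, and the query ID is only a placeholder to be replaced with a real ID from your query history.

```sql
-- Table contents immediately before the statement with this query ID ran
SELECT *
FROM emp_db.public.employee
  BEFORE (STATEMENT => '8e5d0ca9-005e-44e6-b858-a8f5b37c5726');
```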

The below query is used to restore Database EMP:
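Here is a sketch of the restore. UNDROP fails if a database named EMP already exists. You can run SHOW DATABASES HISTORY first to confirm that the dropped database is still within its retention period.

```sql
-- Dropped databases show a non-NULL dropped_on value
SHOW DATABASES HISTORY LIKE 'EMP';

-- Restore the dropped database
UNDROP DATABASE EMP;
```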

The following graphic from the Snowflake documentation summarizes all the above points visually:

Data Retention

Snowflake preserves the previous state of the data when DML operations are performed. By default, all Snowflake accounts have a standard retention duration of one day which is automatically enabled.

- For Snowflake Standard Edition, the retention period can be reduced from the default of 1 day to 0 for all objects (temporary, transient, and permanent).

- Snowflake Enterprise Edition (or higher) gives more flexibility. The retention period for permanent databases, schemas, and tables can be set to any number of days from 0 to 90, whereas for transient and temporary objects it can only be set to 0 or kept at the default of 1 day.

The below query sets a retention period of 90 days while creating the table:

Snowflake provides another exciting feature called Fail-safe, which protects historical data in case of a system failure. For permanent tables, Fail-safe provides a 7-day period that begins after the Time Travel retention period ends. During this period, historical data can be recovered, but only by Snowflake Support. Recovering data through Fail-safe can take hours to days and incurs cost.

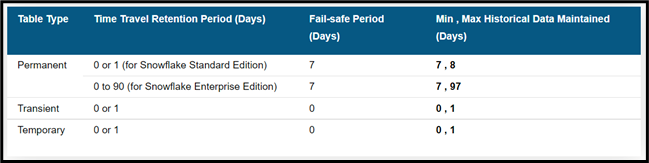

The number of days historical data is maintained is based on the table type and the Fail-safe period for the table. Transient and temporary tables have no Fail-safe period.

Storage fees are incurred for maintaining historical data during both the Time Travel and Fail-safe periods. The fees are calculated for each 24 hours (i.e. 1 day) from the time the data changed. The number of days historical data is maintained is based on the table type and retention period set for the table.

Snowflake minimizes the amount of storage required for historical data by maintaining only the information required to restore the individual table rows that were updated or deleted. As a result, storage usage is calculated as a percentage of the table that changed. In most cases, Snowflake does not keep a full copy of the data. Full copies of tables are maintained only when tables are dropped or truncated.

Temporary and Transient Tables

To manage the storage costs Snowflake provides two table types: TEMPORARY & TRANSIENT, which do not incur the same fees as standard (i.e. permanent) tables:

- Transient tables can have a Time Travel retention period of either 0 or 1 day.

- Temporary tables can also have a Time Travel retention period of 0 or 1 day; however, this retention period ends as soon as the table is dropped or the session in which the table was created ends.

- Transient and temporary tables have no Fail-safe period.

- The maximum additional fees incurred for Time Travel and Fail-safe by these types of tables are limited to 1 day.

The above table illustrates the different scenarios, based on table type.


Snowflake Time Travel is a powerful feature that enables users to examine data usage and manipulations over a specific period. Time Travel queries are ordinary SQL extended with the AT | BEFORE clause, which makes them easy to understand and execute. Users can restore deleted objects, make duplicates, back up data, and recover historical data.


Headquarters - Scottsdale, AZ 85260 ©Encora Digital LLC


Query Syntax

AT | BEFORE

The AT or BEFORE clause is used for Snowflake Time Travel. In a query, it is specified in the FROM clause immediately after the table name and it determines the point in the past from which historical data is requested for the object:

The AT keyword specifies that the request is inclusive of any changes made by a statement or transaction with timestamp equal to the specified parameter.

The BEFORE keyword specifies that the request refers to a point immediately preceding the specified parameter.

For more information, see Understanding & Using Time Travel .

TIMESTAMP => timestamp: Specifies an exact date and time to use for Time Travel. The value must be explicitly cast to a TIMESTAMP.

OFFSET => time_difference: Specifies the difference in seconds from the current time to use for Time Travel, in the form -N where N can be an integer or arithmetic expression (e.g. -120 is 120 seconds, -30*60 is 1800 seconds or 30 minutes).

STATEMENT => id: Specifies the query ID of a statement to use as the reference point for Time Travel. This parameter supports any statement of one of the following types:

- DML (e.g. INSERT, UPDATE, DELETE)

- TCL (BEGIN, COMMIT transaction)

The query ID must reference a query that has been executed within the last 14 days. If the query ID references a query over 14 days old, an error is returned.

To work around this limitation, use the timestamp for the referenced query.

STREAM => 'name': Specifies the identifier (i.e. name) for an existing stream on the queried table or view. The current offset in the stream is used as the AT point in time for returning change data for the source object.

This keyword is supported only when creating a stream (using CREATE STREAM) or querying change data (using the CHANGES clause).

Usage notes

Data in Snowflake is identified by timestamps that can differ slightly from the exact value of system time.

The value for TIMESTAMP or OFFSET must be a constant expression.

The smallest time resolution for TIMESTAMP is milliseconds.

If requested data is beyond the Time Travel retention period (default is 1 day), the statement fails.

In addition, if the requested data is within the Time Travel retention period but no historical data is available (e.g. if the retention period was extended), the statement fails.

If the specified Time Travel time is at or before the point in time when the object was created, the statement fails.

When you access historical table data, the results include the columns, default values, etc. from the current definition of the table. The same applies to non-materialized views. For example, if you alter a table to add a column, querying for historical data before the point in time when the column was added returns results that include the new column.

Historical data has the same access control requirements as current data. Any changes are applied retroactively.

Examples

Select historical data from a table using a specific timestamp:

SELECT * FROM my_table AT(TIMESTAMP => 'Fri, 01 May 2015 16:20:00 -0700'::timestamp_tz);

SELECT * FROM my_table AT(TIMESTAMP => TO_TIMESTAMP(1432669154242, 3));

Select historical data from a table as of 5 minutes ago:

SELECT * FROM my_table AT(OFFSET => -60*5) AS T WHERE T.flag = 'valid';

Select historical data from a table up to, but not including any changes made by the specified transaction:

SELECT * FROM my_table BEFORE(STATEMENT => '8e5d0ca9-005e-44e6-b858-a8f5b37c5726');

Return the difference in table data resulting from the specified transaction:

SELECT oldt.*, newt.*
  FROM my_table BEFORE(STATEMENT => '8e5d0ca9-005e-44e6-b858-a8f5b37c5726') AS oldt
    FULL OUTER JOIN my_table AT(STATEMENT => '8e5d0ca9-005e-44e6-b858-a8f5b37c5726') AS newt
    ON oldt.id = newt.id
  WHERE oldt.id IS NULL OR newt.id IS NULL;

Snowflake Time Travel in a Nutshell

Yeah, the title is a bit clickbaity, so if you are too sensitive, please stop reading, because the article won’t explain to you all the details of the mentioned feature of Snowflake. But despite that, it will show some interesting things that are not mentioned in the documentation and it will help to answer at least one question in the certification exam. So, I think it’s worth giving it a few minutes of your time.

Snowflake is an advanced data platform provided as Software-as-a-Service (SaaS). It enables data storage, processing, and analytic solutions that are faster, easier to use, and far more flexible than traditional offerings. Snowflake isn’t a service built on top of Hadoop or Spark or any other “big data” technology, it is a completely new SQL query engine designed for the cloud and cloud-only. To the user, Snowflake provides all of the functionality of an enterprise analytic database, along with many additional special features and unique capabilities.

In this article, we won’t go into explaining what Snowflake is but will be more specific about one of the cool features of this data platform, which is Time Travel. Let me know in the comments if you want a brief overview of this product or maybe some explanation of its other features.

Time travel

Snowflake Time Travel enables you to query data as it existed at a particular point in time and to roll back to the corresponding version. This means that intentional or unintentional changes to the underlying data can be reverted. Time Travel is a very powerful feature that allows you to:

- Query data in the past that has since been updated or deleted.

- Create clones of entire tables, schemas, and databases at or before specific points in the past.

- Restore tables, schemas, and databases that have been dropped.

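To support Time Travel, the following SQL extensions have been implemented:

- AT | BEFORE clause in SELECT statements and CREATE … CLONE commands, used with one of these parameters: TIMESTAMP (a specific date and time), OFFSET (time difference in seconds from the present time), or STATEMENT (identifier for statement, e.g. query ID)

- UNDROP command for tables, schemas, and databases.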

A key component of Snowflake Time Travel is the data retention period. When data in a table is modified, including deletion of data or dropping an object containing data, Snowflake preserves the state of the data before the update. The data retention period specifies the number of days for which this historical data is preserved and, therefore, Time Travel operations (SELECT, CREATE … CLONE, UNDROP) can be performed on the data.

By default, the data retention period is set to 1 day, and Snowflake recommends keeping this setting as is so that unintentional data modifications can be undone. On Enterprise Edition or higher, the period can be extended up to 90 days, but keep in mind that Time Travel incurs additional storage costs. Setting the data retention period to 0 disables Time Travel. The retention period can be set at the account, database, schema, or table level.

After the retention period expires, the data of permanent objects is moved into Snowflake Fail-safe and cannot be restored by regular users. Only Snowflake Support can restore data from Fail-safe.

Querying historical data

When any DML operations are performed on a table, Snowflake retains previous versions of the table data for a defined period of time. This enables querying earlier versions of the data using the AT | BEFORE clause. Now let’s see the examples. For the sake of this article, we will create a separate DB in Snowflake, a table and fill it with some seed values. Here we go:
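Here is a stand-in sketch of that setup. It assumes a hypothetical database tt_demo_db, a table seed_table, and columns id and name.

```sql
-- Hypothetical demo database, table, and seed rows
CREATE DATABASE tt_demo_db;
USE SCHEMA tt_demo_db.public;

CREATE TABLE seed_table (
    id   INT,
    name STRING
);

INSERT INTO seed_table (id, name) VALUES
    (1, 'alpha'),
    (2, 'beta'),
    (3, 'gamma'),
    (4, 'delta');

SELECT * FROM seed_table ORDER BY id;
```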

And we have our seed records. Now let’s add some duplicates.
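With the same hypothetical table, re-inserting every row is enough to duplicate all records:

```sql
-- Re-insert every existing row, so each record now appears twice
INSERT INTO tt_demo_db.public.seed_table
SELECT * FROM tt_demo_db.public.seed_table;
```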

Having duplicates in our table isn’t a good idea, so we can use Time Travel to see how the data looked before the duplicates appeared. As mentioned before, there are a few different methods to do that, so let’s check them all.
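Below is a sketch of two of these methods against the hypothetical seed_table. The offset and the placeholder timestamp must point to a moment after the seed rows were inserted and before the duplicates were added. A point before the table was created makes the statement fail.

```sql
-- 1. Relative: the table as it was 10 minutes ago
SELECT * FROM tt_demo_db.public.seed_table AT (OFFSET => -60 * 10);

-- 2. Absolute: the table as it was at a given time (placeholder value)
SELECT * FROM tt_demo_db.public.seed_table
  AT (TIMESTAMP => 'Wed, 31 May 2023 14:00:00 -0700'::TIMESTAMP_TZ);
```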

As you can see we are back to the first version of the data! Next queries will return the same result.

Also, it is possible to restore the data before the change happened by finding the Query ID that introduced mentioned change. We can find it on the “Query History” tab in “Activity”.
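As an alternative to the UI, you can look up the query ID with the QUERY_HISTORY table function. The sketch below assumes the hypothetical tt_demo_db and seed_table. The angle-bracket value is a placeholder for the ID you find.

```sql
-- Find recent INSERT statements against the demo table
SELECT query_id, query_text, start_time
FROM TABLE(tt_demo_db.INFORMATION_SCHEMA.QUERY_HISTORY())
WHERE query_type = 'INSERT'
  AND query_text ILIKE '%seed_table%'
ORDER BY start_time DESC
LIMIT 10;

-- The table as it was immediately before the duplicating INSERT ran
-- (replace the placeholder with the query_id found above)
SELECT * FROM tt_demo_db.public.seed_table
  BEFORE (STATEMENT => '<query_id_of_duplicate_insert>');
```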

Cloning historical data

There is another feature in Snowflake that is worth investigating: Zero-Copy Cloning. Basically, it creates a copy of a database, schema, or table. A snapshot of the data in the source object is taken when the clone is created and is made available to the cloned object. The cloned object is writable and independent of the clone source, so changes made to either the source or the clone are not reflected in the other. Cloning in Snowflake is zero-copy, which means that no data is copied at clone creation time. Instead, the newly created clone references the existing micro-partitions of the source table. But this topic deserves its own article, so this is just a brief intro, and we will dig deeper later.

Cloning with Time Travel works using the same parameters:
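Here is a sketch of cloning with Time Travel, assuming the hypothetical seed_table. You will need to adjust the offset and the placeholder query ID to your own history.

```sql
-- Clone the table as it was 10 minutes ago
CREATE TABLE tt_demo_db.public.restored_table
  CLONE tt_demo_db.public.seed_table AT (OFFSET => -60 * 10);

-- Clone the table as it was immediately before a given statement (placeholder ID)
CREATE TABLE tt_demo_db.public.restored_table_2
  CLONE tt_demo_db.public.seed_table BEFORE (STATEMENT => '<query_id_of_duplicate_insert>');
```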

The results of SELECTs are the same:

Dropping and Undropping

When a table, schema, or database is dropped, it is not immediately overwritten or removed from the system. Instead, it is retained for the data retention period for the object, during which time the object can be restored.

To drop a table, schema, or database, the following commands are used:

- DROP TABLE

- DROP SCHEMA

- DROP DATABASE

To undrop a table, schema, or database:

- UNDROP TABLE

- UNDROP SCHEMA

- UNDROP DATABASE

This is actually where the fun comes in. Let’s start with a simple example and then go into the woods.

Simple example:
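A minimal sketch, assuming a hypothetical tt_demo_db.public.restored_table that is still within its retention period:

```sql
DROP TABLE tt_demo_db.public.restored_table;

-- Restore it while it is still within the retention period
UNDROP TABLE tt_demo_db.public.restored_table;

SELECT COUNT(*) FROM tt_demo_db.public.restored_table;
```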

The dropped tables can be seen by using the command:
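The command is SHOW TABLES HISTORY. Here is a sketch, assuming the hypothetical tt_demo_db.public schema:

```sql
-- Current and dropped tables in the schema; dropped ones have a non-NULL dropped_on
SHOW TABLES HISTORY IN SCHEMA tt_demo_db.public;

-- Or narrow the output to matching table names
SHOW TABLES HISTORY LIKE 'restored_table%' IN SCHEMA tt_demo_db.public;
```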

In the output of SHOW TABLES HISTORY, the “dropped_on” column shows the last time each table was dropped. If the value in this column is NULL, the table currently exists (it has not been dropped). Dropping and undropping the same table multiple times does not create additional records in this output. Only the timestamp in “dropped_on” is updated.

But if a table is dropped and then a new table is created with the same name, the UNDROP command will fail, stating that the table exists.

As you can see in the screenshot above there are two tables restored_table_2, but one of them has a timestamp value in the column “dropped_on”. This is because the first time I ran the query to create this table I used the wrong schema, so I ran CREATE OR REPLACE TABLE … command, which actually dropped a wrong table and created a new one with the same name. So if now I try to UNDROP the old table with wrong records, the query will fail as stated earlier.

But it doesn’t mean that the data from the previous table is lost. As we saw earlier, we still can see 2 tables in SHOW TABLES HISTORY. In order to restore the original (or in this case wrong) table, the newly created table has to be renamed:
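Below is a sketch of that rename followed by the UNDROP. It assumes the tables live in the hypothetical tt_demo_db.public schema and uses a hypothetical new name for the newer table.

```sql
-- Move the newer table out of the way so the name is free
ALTER TABLE tt_demo_db.public.restored_table_2
  RENAME TO tt_demo_db.public.restored_table_2_new;

-- Restore the dropped table under its original name
UNDROP TABLE tt_demo_db.public.restored_table_2;
```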

And now the UNDROP will work.

And we can see the wrong records I added to this table:

Now, I hope you won’t do such a thing, but here we saw what happens if you drop a table and then create a totally new one with the same name: we can still recover the old table. So what happens if you drop this new table and then create a new one again with the same name? Will you be able to recover the data from the oldest table? The answer is yes. It will take some effort, but it is possible. This is what our SHOW TABLES HISTORY output looks like right now. We have 5 tables, and all are up and running:

Let’s drop restored_table and create it again using the same command and add a few new records to it:

And now we go further and drop again restored_table and create a new one.
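Here is a sketch of both rounds. It assumes the hypothetical tt_demo_db.public schema and uses hypothetical columns id and name, since the original table definition is unknown.

```sql
-- Second version: drop the table, recreate it with the same name, add rows
DROP TABLE tt_demo_db.public.restored_table;
CREATE TABLE tt_demo_db.public.restored_table (id INT, name STRING);
INSERT INTO tt_demo_db.public.restored_table VALUES (10, 'v2-a'), (11, 'v2-b');

-- Third version: drop and recreate once more
DROP TABLE tt_demo_db.public.restored_table;
CREATE TABLE tt_demo_db.public.restored_table (id INT, name STRING);

SHOW TABLES HISTORY LIKE 'restored_table' IN SCHEMA tt_demo_db.public;
```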

To restore the first table that was dropped we will need to go through a set of renamings. Our original table had 4 rows. Let’s go. Restore the second table.

Restore the original table and rename it to v1
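The whole renaming sequence can be sketched as follows, assuming the same hypothetical schema. UNDROP always restores the most recently dropped version, so you must rename each restored table before the next UNDROP can reach an older version.

```sql
-- 1. Rename the current (third) version so the name is free
ALTER TABLE tt_demo_db.public.restored_table
  RENAME TO tt_demo_db.public.restored_table_v3;

-- 2. UNDROP restores the most recently dropped version (the second one)
UNDROP TABLE tt_demo_db.public.restored_table;
ALTER TABLE tt_demo_db.public.restored_table
  RENAME TO tt_demo_db.public.restored_table_v2;

-- 3. The name is free again, so the next UNDROP brings back the original table
UNDROP TABLE tt_demo_db.public.restored_table;
ALTER TABLE tt_demo_db.public.restored_table
  RENAME TO tt_demo_db.public.restored_table_v1;

SELECT COUNT(*) FROM tt_demo_db.public.restored_table_v1;
```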

And here we are, with all the versions of our data restored. As you can see, in Snowflake there is little need to create separate versions of your tables, since you can always go back in time. Be careful with the data retention period, though: once it has expired, you cannot get your data back that easily.

In the end, Time Travel is a very powerful tool for:

- Restoring data-related objects (tables, schemas, and databases) that might have been accidentally or intentionally deleted.

- Duplicating or backing up data from key points in the past.

- Analyzing data usage/manipulation over specified periods of time.

Hope you find it useful 😉 see ya in the next one 😉



Snowflake Time Travel: An In-depth Guide

October 18, 2023

March 19th, 2024

In the constantly evolving field of data management, Snowflake Database, a cloud-based database, has risen as a prominent player, renowned for its innovative features and robust capabilities. Among its array of features, one that shines particularly bright is the “Time Travel” feature, enabling users to journey through time and delve into the historical snapshots of their data.

In this blog post, we will embark on a voyage through Snowflake’s Time Travel feature, unveiling its importance, applications, and how it can empower businesses to make data-driven decisions like never before.

Snowflake Time Travel: An Overview

Imagine possessing the ability to turn back time and revisit your data precisely as it existed at a specific moment in the past. This is what Snowflake’s Time Travel feature provides, within a configurable retention period. It’s a distinctive data management capability that allows you to query and analyze your data at various points in time, without building intricate ETL processes or maintaining manual snapshot copies. Essentially, this feature turns your data warehouse into a time machine, enabling you to explore the evolution of your data.

Key Aspects of Snowflake Time Travel:

Data Versioning:

Snowflake continuously maintains multiple versions of data, allowing users to access and query data at any point in time within the data retention period.

Granular Control:

Users can specify a timestamp, a relative time offset, or the ID of a statement to identify exactly which historical version of the data they want to access. This granular control ensures precision.

No Data Duplication:

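Snowflake doesn’t create a full copy of a table for each point in time. It retains only the micro-partitions that were changed or deleted, which keeps the overhead lower than maintaining manual snapshot copies, although this retained data is still billed as storage.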

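Protection Against Data Loss:

Changes to data are tracked and retained for the configured retention period, so modified or deleted data can be recovered as long as that period has not expired.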

Point-in-Time Queries:

Users can run SQL queries on historical data to analyze trends, troubleshoot issues, or recover accidentally deleted data.

Data Recovery:

Snowflake Time Travel is useful for data recovery, audit trails, and compliance requirements, ensuring data integrity.

Time-Travel Cloning:

Users can create a clone of a database, schema, or table as it existed at a specific point in time, for testing or analytical purposes, without affecting the production data.


Pros of Time Travel in Snowflake

Historical Data Analysis:

Snowflake’s Time Travel feature allows you to analyze data at different points in time, which is invaluable for historical trend analysis. This can provide insights into how your data has evolved and help you make data-driven decisions.

Data Recovery:

In the event of accidental data changes or deletions, Time Travel lets you revert to a previous point in time, ensuring data integrity and minimizing downtime.

Auditing and Compliance:

Time Travel simplifies compliance and auditing processes by enabling you to track changes to data over time. This is particularly useful for meeting regulatory requirements.

Testing and Debugging:

Developers can use Time Travel to recreate and debug issues by analyzing data as it was during a problematic event. This can streamline troubleshooting and improve software quality.

Data Versioning:

Cloning data at critical points in time allows for data versioning, making it easier to manage and reference the historical states of data objects.

Ease of Use:

Snowflake’s implementation of Time Travel is user-friendly: it is enabled by default with a 1-day retention period, requires minimal configuration, and doesn’t involve building separate ETL processes or snapshot copies.

Cons of Time Travel in Snowflake

Storage Costs:

Retaining historical data can lead to increased storage costs. Storing multiple versions of data, especially for large datasets, can impact your organization’s cloud storage expenses.

Query Performance:

The size of your dataset can affect query performance when using Time Travel. Querying historical data can be slower than querying the most recent data, and it may require additional optimization efforts.

Resource Consumption:

Running queries on historical data can consume computational resources. Organizations need to monitor and allocate sufficient resources to accommodate Time Travel queries without impacting other workloads.

Data Privacy:

Retaining historical data could potentially raise data privacy and security concerns, as older data may contain sensitive information that needs to be handled carefully.

How to implement Time Travel: Snowflake Time Travel Example

Snowflake employs a straightforward yet ingenious mechanism to enable Time Travel. Table data is stored in immutable micro-partitions; when data is updated or deleted, the old micro-partitions are kept for the retention period instead of being discarded, and metadata records which micro-partitions made up each object at each point in time.

Time Travel queries are written with the following clauses, placed directly after the table name:

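- AT(TIMESTAMP => ...): Query data as it existed at a specific timestamp.

- AT(OFFSET => ...): Query data as it existed a given number of seconds ago, written as a negative number (for example, -60*5 for five minutes ago).

- BEFORE(STATEMENT => ...): Query data as it existed immediately before the statement with the given query ID ran.

- CHANGES(INFORMATION => DEFAULT) combined with AT(...) and END(...): Examine the changes made during a time range. This requires change tracking to be enabled on the table (ALTER TABLE ... SET CHANGE_TRACKING = TRUE).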
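In Snowflake SQL, these point-in-time options are written as AT(...) or BEFORE(...) clauses directly after the table name, in a SELECT as well as in a CLONE statement. As a sketch, the hypothetical Python helper below assembles such a clause as a string so the three variants sit side by side; it is not part of any Snowflake library, and production code should pass user-supplied values as bind parameters rather than formatting them into SQL.

```python
from typing import Optional


def time_travel_clause(timestamp: Optional[str] = None,
                       offset_seconds: Optional[int] = None,
                       statement_id: Optional[str] = None,
                       before: bool = False) -> str:
    """Build an AT(...) or BEFORE(...) clause from exactly one point-in-time spec.

    Hypothetical helper for illustration only.
    """
    specs = [s for s in (timestamp, offset_seconds, statement_id) if s is not None]
    if len(specs) != 1:
        raise ValueError("give exactly one of timestamp, offset_seconds, statement_id")
    keyword = "BEFORE" if before else "AT"
    if timestamp is not None:
        # Cast the literal so Snowflake interprets it as a timestamp.
        return f"{keyword}(TIMESTAMP => '{timestamp}'::TIMESTAMP_LTZ)"
    if offset_seconds is not None:
        # OFFSET is measured in seconds back from now, so it must be negative.
        if offset_seconds >= 0:
            raise ValueError("offset_seconds must be negative (seconds in the past)")
        return f"{keyword}(OFFSET => {offset_seconds})"
    return f"{keyword}(STATEMENT => '{statement_id}')"


table = "your_table"
print(f"SELECT * FROM {table} {time_travel_clause(offset_seconds=-60 * 5)};")
# SELECT * FROM your_table AT(OFFSET => -300);
```

The same clause can follow the source table in a clone, e.g. CREATE TABLE your_cloned_table CLONE your_source_table followed by the generated AT(...) text.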

Time Travel also supports cloning: if you want to preserve particular historical data for reporting or analysis, you can clone databases, schemas, or tables as of a specific timestamp. This creates a new object that holds the data exactly as it existed at the selected point in time.

Below are some example SQL statements for querying and recovering historical data:

SELECT * FROM your_table AT(TIMESTAMP => 'YYYY-MM-DD HH:MI:SS'::TIMESTAMP_LTZ);

SELECT * FROM your_table CHANGES(INFORMATION => DEFAULT) AT(TIMESTAMP => 'start_timestamp'::TIMESTAMP_LTZ) END(TIMESTAMP => 'end_timestamp'::TIMESTAMP_LTZ);

CREATE OR REPLACE TABLE your_cloned_table CLONE your_source_table AT(TIMESTAMP => 'YYYY-MM-DD HH:MI:SS'::TIMESTAMP_LTZ);

Time Travel also lets you undo a drop and recover a deleted table, schema, or database with UNDROP, provided its data retention period has not expired (and was not set to 0).

For example,

UNDROP TABLE table_name;

UNDROP SCHEMA schema_name;

UNDROP DATABASE db_name;

Managing Historical Data Retention

Snowflake employs a retention period that defines how far back in time you can travel. This period is customizable to suit your organizational needs, ensuring that historical data remains accessible within the specified timeframe.

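You can check the current retention period with the SHOW PARAMETERS command, for example SHOW PARAMETERS LIKE 'DATA_RETENTION_TIME_IN_DAYS' IN ACCOUNT; (use IN DATABASE, IN SCHEMA, or IN TABLE followed by the object name to check a specific object).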

For Standard Edition, the retention period is 1 day by default and can be set to 0 or 1 day. For Enterprise Edition and higher, it can be set anywhere from 0 to 90 days for permanent objects. Transient and temporary tables, however, have a maximum retention period of 1 day (0 or 1) on every edition.
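The retention limits above boil down to a small lookup. This is a minimal sketch that encodes only these rules: Standard Edition allows 0 or 1 day, Enterprise Edition and higher allow up to 90 days for permanent tables, and transient and temporary tables allow 0 or 1 day on any edition. The function names and edition labels are hypothetical; check the current Snowflake documentation before relying on the limits.

```python
def max_retention_days(edition: str, table_type: str) -> int:
    """Largest DATA_RETENTION_TIME_IN_DAYS allowed under the rules stated above.

    Hypothetical helper; edition labels are illustrative strings.
    """
    if table_type in ("transient", "temporary"):
        return 1  # 0 or 1 day, regardless of edition
    if table_type != "permanent":
        raise ValueError(f"unknown table type: {table_type}")
    if edition == "standard":
        return 1
    if edition in ("enterprise", "business_critical", "vps"):
        return 90
    raise ValueError(f"unknown edition: {edition}")


def is_valid_retention(days: int, edition: str, table_type: str) -> bool:
    """True if `days` is an allowed retention setting for this edition and table type."""
    return 0 <= days <= max_retention_days(edition, table_type)


print(is_valid_retention(50, "enterprise", "permanent"))  # True
print(is_valid_retention(50, "standard", "permanent"))    # False
```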

Also, data retention can be set at the account level and at the object level (database, schema, and table).

For example, the object parameter DATA_RETENTION_TIME_IN_DAYS can be used to set a retention period of 50 days for a Snowflake database and table (a value above 1 requires Enterprise Edition or higher):

-- Database with a retention period of 50 days

CREATE DATABASE my_database DATA_RETENTION_TIME_IN_DAYS = 50;

-- Table with a retention period of 50 days (the columns are placeholders)

CREATE TABLE my_table (id INT, name VARCHAR) DATA_RETENTION_TIME_IN_DAYS = 50;

Note: When the retention period ends, the historical data is moved into Snowflake Fail-safe, and:

- Historical data is no longer available for querying.

- Past objects can no longer be cloned.

- Dropped objects can no longer be restored with UNDROP.
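Putting the retention period and Fail-safe together, the sketch below reports which recovery path is left for data changed or dropped at a given moment. It assumes Snowflake's documented 7-day Fail-safe period for permanent tables and no Fail-safe for transient or temporary tables; `recovery_phase` and its return labels are hypothetical, and the boundaries are treated approximately.

```python
from datetime import datetime, timedelta


def recovery_phase(changed_at: datetime, now: datetime,
                   retention_days: int, table_type: str = "permanent") -> str:
    """Classify data changed or dropped at `changed_at` (hypothetical helper).

    "time_travel": still queryable, cloneable, and restorable with UNDROP.
    "fail_safe":   past retention; recoverable only through Snowflake Support.
    "gone":        past both windows.
    """
    age = now - changed_at
    if age <= timedelta(days=retention_days):
        return "time_travel"
    # Assumed: 7 days of Fail-safe for permanent tables, none otherwise.
    failsafe_days = 7 if table_type == "permanent" else 0
    if age <= timedelta(days=retention_days + failsafe_days):
        return "fail_safe"
    return "gone"
```

For example, `recovery_phase(datetime(2024, 3, 1), datetime(2024, 3, 4), retention_days=1)` returns "fail_safe": the one-day Time Travel window has passed, but the data is still inside the following seven days. The same call with `table_type="transient"` returns "gone".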


Snowflake Time Travel: The Final Summary

Snowflake’s Time Travel feature is a transformative innovation in the realm of data warehousing and analytics. It empowers organizations to harness the historical context of their data, facilitating improved decision-making, enhanced compliance, and superior data management capabilities.

By offering a seamless and efficient method to navigate through time, Snowflake ensures that your data remains a valuable asset, not only in the present but throughout its entire lifecycle.

Nevertheless, it does come with some limitations, such as potential cost escalation, increased storage requirements, and possible performance impacts. It is therefore advisable to follow best practices, such as using a shorter retention period (or transient tables) for data that does not need long-term recovery, to avoid unnecessary expenses.

SPEC INDIA, as your single stop IT partner has been successfully implementing a bouquet of diverse solutions and services all over the globe, proving its mettle as an ISO 9001:2015 certified IT solutions organization. With efficient project management practices, international standards to comply, flexible engagement models and superior infrastructure, SPEC INDIA is a customer’s delight. Our skilled technical resources are apt at putting thoughts in a perspective by offering value-added reads for all.


Snowflake Time Travel: Clearly Explained

Published on 7/24/2023

In the realm of cloud computing and big data analytics, Snowflake has emerged as a leading data warehouse solution. Its architecture, designed for the cloud, provides a flexible, scalable, and easy-to-use platform for managing and analyzing data. One of the standout features of Snowflake is Time Travel. This feature is not just a novelty; it's a powerful tool that can significantly enhance your data management capabilities.

Snowflake Time Travel is a feature that allows you to access historical data within a specified time frame. This means you can query data as it existed at any point in the past, making it an invaluable tool for data retention and data governance. Whether you're auditing data changes, complying with data regulations, or recovering from a data disaster, Time Travel has you covered.

Want to quickly visualize your Snowflake data? Use RATH to easily turn your Snowflake database into interactive visualizations! RATH is an AI-powered, automated data analysis and data visualization tool that is supported by a passionate open-source community. Check out RATH GitHub for more.

Learn more about how to visualize Snowflake data in the RATH Docs.

Understanding Snowflake Time Travel